Hello you out there!

I just started running the first serious test of the system I’ve developed during this year’s Google Summer of Code. If I wanted to put it in sensational words, the test could be called “Distribution of Particle Physics High Performance Computing Jobs among Multiple Computing Clouds”, just to get some readers :-). During the test, there will be some time when I just sit around and watch my monitor, so I decided to share my experience with the new system and keep a record of the test progress in this blog post.

Content (don’t worry, the sections are short):

- 0 Introduction

- 1 Preparation

- 2 VM startup

- 3 Session monitoring (number of Job Agents)

- 4 Job submission

- 5 Session monitoring (number of jobs)

- 6 Job monitoring

- 7 Job completion, output receipt

- 8 Examine output

- 9 VM shutdown

- 10 Appendix

0 Introduction

First of all, I have to introduce the system. This is the longest section.

It’s a job scheduling system supporting Virtual Machines (VMs) in multiple Infrastructure-as-a-Service computing clouds. Because of this, I came up with the name “Clobi”, which loosely comes from “cloud” and “combination”. If you know a better name, then please let me know ;-). Currently, the system supports Nimbus (and is prepared for Cumulus, Nimbus’ storage service) and Amazon Web Services (more precisely EC2, SQS, SimpleDB, S3).

It’s an “elastic” and “scalable” job system that can set up a huge computing resource pool almost instantly. VMs are added to or removed from the pool dynamically, based on need and demand. Jobs are submitted and processed using an asynchronous queueing system. An arbitrary number of clients is allowed to submit jobs to an existing resource pool. The basic reliability is inherited from the reliability of the core messaging components Amazon SQS (for queueing) and Amazon SimpleDB (for bookkeeping): to prevent data from being lost or becoming unavailable, it is stored redundantly and geographically dispersed across multiple datacenters.

Furthermore, the job system’s components are highly decoupled, which allows single components to fail or to get re-initialized without affecting the others.

The motivating application for this system is ATLAS Computing (for the ATLAS experiment at LHC, CERN (Geneva)): a common ATLAS Computing application (the so-called “full chain”) will be run during this test.

But: the basic system is totally generic and can be used whenever it’s convenient to distribute jobs among different clouds. This is the case when one tries to satisfy the basic computing power needs for a low price, e.g. by operating one’s own Nimbus cloud, but wants to be able to instantly balance out peaks of desired computing power by simply adding Amazon’s EC2 to the resource pool for a certain amount of time. By using Clobi, combining different clouds into one big resource pool becomes very easy.

These are the main components used during this test:

- a special ATLAS Software Virtual Machine image based on CernVM. I placed it on Nimbus Teraport Cloud and (as Amazon Machine Image) on S3 for EC2

- the Clobi Resource Manager (observing job queues, starting/killing VMs, …)

- the Clobi Job Agent (running on VMs, polling & running jobs, bookkeeping, …)

- the Clobi Job Management Interface (providing methods to submit / remove / kill / monitor / … jobs)

- a Ganga Clobi Backend (integrates Clobi into Ganga, which is “an easy-to-use frontend for job definition and management”)

The meaning of these components will become clearer in the following parts.

Let me show step by step, but only very roughly, how I use Clobi within the first serious test. Many details are left out, but you will get it in principle. After reading this blog post, you will have an overview of what the system does and what I’ve actually done during the summer.

1 Preparation

I’ve prepared session/cloud configuration files. Using them, I started a new Clobi session (a resource pool) with the Clobi Resource Manager (it’s a Python application and I use it locally, here on my desktop machine). First, it does a lot of configuration and initialization work, including setting up SQS queues and SimpleDB domains. Interaction with Amazon Web Services is done via the boto module for Python. After initialization, the Resource Manager offers an interactive mode (it’s a multi-threaded console application with a user interface, built using the urwid module for Python).
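To make the initialization step a bit more concrete, here is a minimal sketch of what "setting up SQS queues and SimpleDB domains" for a session could look like. The naming scheme, the function names and the number of priorities are assumptions made for illustration, not Clobi's actual code. The connection objects are passed in; with boto 2 they would typically come from `boto.connect_sqs()` and `boto.connect_sdb()`, whose `create_queue` and `create_domain` methods are the only calls used here.

```python
def session_resource_names(session_id, priorities=(1, 2, 3)):
    """Derive the resource names for one session.

    Hypothetical naming scheme: one SimpleDB domain for bookkeeping and
    one SQS queue per job priority.
    """
    domain = "%s_bookkeeping" % session_id
    queues = ["%s_jobs_prio%d" % (session_id, p) for p in priorities]
    return domain, queues


def init_session(sqs_conn, sdb_conn, session_id):
    """Create the bookkeeping domain and the job queues for a new session.

    sqs_conn and sdb_conn are expected to offer create_queue(name) and
    create_domain(name), as boto 2 connection objects do.
    """
    domain, queues = session_resource_names(session_id)
    sdb_conn.create_domain(domain)
    for name in queues:
        sqs_conn.create_queue(name)
    return domain, queues
```

Keeping the name derivation separate from the API calls means the same names can be written into a configuration file for the clients later on.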

2 VM startup

Using the Resource Manager, I started one VM on EC2 and one on Nimbus Teraport Cloud, both based on “the special ATLAS Software VM”, containing ATLAS Software 15.2.0 and the Clobi Job Agent. Starting VMs manually is done with a very simple command. The driving forces in the background are boto in case of EC2 and the Nimbus cloud reference client, which I’ve wrapped and controlled via Python’s subprocess module. The main loop thread of the Resource Manager periodically polls the states of just started EC2 instances and the states of Nimbus client subprocesses to figure out if the instructed actions result in success or not.
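The "wrap a command-line client in a subprocess and poll its state from a main loop" pattern can be sketched as follows. This is only an illustration under assumptions: the function names are made up, and the usage example runs tiny Python one-liners as stand-ins for the Nimbus cloud client.

```python
import subprocess
import sys
import time


def start_client(args):
    """Launch a command-line client without blocking the caller."""
    return subprocess.Popen(
        args, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL
    )


def poll_clients(running):
    """Check every subprocess once.

    Returns {label: returncode} for the processes that have ended and
    removes them from the running dict.
    """
    finished = {}
    for label, proc in list(running.items()):
        returncode = proc.poll()  # None while the process is still running
        if returncode is not None:
            finished[label] = returncode
            del running[label]
    return finished


def wait_for_all(running, interval=0.1, timeout=30.0):
    """Poll periodically until all subprocesses ended or the timeout hit."""
    results = {}
    deadline = time.time() + timeout
    while running and time.time() < deadline:
        results.update(poll_clients(running))
        if running:
            time.sleep(interval)
    return results


if __name__ == "__main__":
    # Stand-ins for two client invocations: one succeeds, one fails.
    clients = {
        "vm-ok": start_client([sys.executable, "-c", "pass"]),
        "vm-fail": start_client([sys.executable, "-c", "import sys; sys.exit(3)"]),
    }
    print(wait_for_all(clients))
```

A return code of 0 would be read as "the instructed action succeeded"; anything else as a failure that deserves a look at the client's log.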

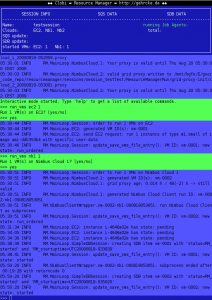

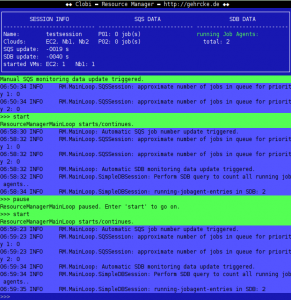

The following screenshot shows the Resource Manager in action (you basically see a terminal window). Follow a bit of the log. As you can see, it’s very easy to run VMs and, after a certain amount of time, the Resource Manager detects that both VMs have successfully started booting:

The EC2 VM needed around 10 minutes to start up, while the Nimbus VM needed 20 minutes. Reason: the AMI is ~10 GB big; the image on Nimbus ~20 GB (wasted space & time, but it’s just a test..).

You might say: “Only two VMs? Boring..”. I say: I could have taken several hundred. The point is: it would not make any difference, except in cost and in the amount of space used for log files. Clobi is designed, and built on technology, to work reliably across different orders of magnitude. This is often called “scalable” or “elastic”. Basically, this positive characteristic is inherited from Amazon’s SQS, SDB and S3, which Clobi uses to manage and control the system.

3 Session monitoring (number of Job Agents)

I’m leaving out almost all the details of how the components exchange information. But I have to tell you the following, so that you are not completely confused:

- the Resource Manager gave some bootstrap information to the VMs.

- the Job Agent is automatically invoked on VM operating system startup.

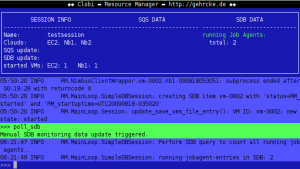

Using the bootstrap information, each VM’s Job Agent “registers with SimpleDB”. The Resource Manager has a monitoring functionality to check SimpleDB for running Job Agents:

Voilà, now it’s definitely known that both VMs successfully started their Job Agents. These start in a “watching/lurking” state, periodically polling SQS for jobs.
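The "watching/lurking" behaviour can be pictured with a small sketch. It assumes that an agent takes at most one job per free slot (for example one slot per virtual core) and uses a plain `deque` as a stand-in for an SQS queue; the class and attribute names are hypothetical.

```python
from collections import deque


class JobAgent:
    """Toy model of an agent that polls a queue for work."""

    def __init__(self, name, slots):
        self.name = name
        self.slots = slots   # how many jobs may run at the same time
        self.running = []    # jobs currently being processed

    def poll_once(self, job_queue):
        """Take jobs from the queue while free slots remain.

        Returns the list of jobs taken during this poll.
        """
        taken = []
        while len(self.running) < self.slots and job_queue:
            job = job_queue.popleft()
            self.running.append(job)
            taken.append(job)
        return taken


if __name__ == "__main__":
    queue = deque(["job-a", "job-b", "job-c"])
    two_core_agent = JobAgent("two-core-vm", slots=2)
    one_core_agent = JobAgent("one-core-vm", slots=1)
    print(two_core_agent.poll_once(queue))
    print(one_core_agent.poll_once(queue))
```

A real agent would call `poll_once` periodically and sleep in between, which is what keeps it cheap while there is nothing to do.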

4 Job submission

The SimpleDB / SQS / S3 data structure together with Clobi’s Job Management Interface allows submitting jobs with different priorities, removing jobs, killing running jobs and monitoring jobs. Furthermore, transmission and receipt of an input/output sandbox archive is possible. This is needed to deliver executables and small input data and to receive small output data as well as stdout/err and other logs.

I downloaded Ganga and installed it on my local machine. Then I started developing a new “Clobi backend” to integrate Clobi’s Job Management Interface into Ganga. Using this new backend, it’s possible to submit/kill/monitor/.. jobs right from the Ganga interface, using Ganga’s common job description and management commands.

To test the system, I’ve prepared some shell scripts that run “The Full Chain” on the worker nodes. This is a very good test to validate the whole system: it needs some very small input files, only works if the ATLAS Software was set up properly (uoooh.. not trivial!), stresses the VM (the simulation step consumes much CPU power) and leaves some small output files for the output sandbox.
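One simple way to get priorities out of plain FIFO queues is to keep one queue per priority and always look at the most urgent non-empty one first. The sketch below models that idea in memory. It assumes that a higher number means a more urgent job and uses invented class and method names; it is not the Job Management Interface itself.

```python
from collections import deque


class PriorityQueues:
    """In-memory stand-in for one job queue per priority level."""

    def __init__(self, priorities=(1, 2, 3)):
        self.queues = {p: deque() for p in priorities}

    def submit(self, job_id, priority):
        """Append a job to the queue of the given priority."""
        self.queues[priority].append(job_id)

    def receive(self):
        """Return the next job, most urgent queue first, or None."""
        # Assumption: a higher number means a more urgent job.
        for priority in sorted(self.queues, reverse=True):
            if self.queues[priority]:
                return self.queues[priority].popleft()
        return None

    def remove(self, job_id):
        """Remove a job that has not been received yet."""
        for queue in self.queues.values():
            if job_id in queue:
                queue.remove(job_id)
                return True
        return False

    def counts(self):
        """Number of waiting jobs per priority."""
        return {p: len(q) for p, q in self.queues.items()}
```

For example, after submitting `job-low` with priority 1 and `job-high` with priority 3, `receive()` hands out `job-high` first.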

At Ganga startup, I provided a configuration file containing a few essential pieces of information about the Clobi session that I had set up before via the Resource Manager. From this configuration file, Ganga’s Clobi backend knows, for example, which SimpleDB domain to query and to which SQS queues the job messages must be submitted. Using this bootstrap information, an arbitrary number of Ganga instances could be used from anywhere to submit and manage jobs within this particular Clobi session.
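As an illustration of how little a client needs to know, here is a sketch of such a bootstrap configuration and how it could be read with Python's `configparser`. The section name, the keys and the queue and domain names are invented for this example; the real configuration file format is not shown in this post.

```python
import configparser

# Hypothetical session description; all keys and values are examples.
EXAMPLE_CONFIG = """
[session]
sdb_domain = testsess_bookkeeping
sqs_queues = testsess_jobs_prio1, testsess_jobs_prio2
s3_bucket = atlassessions
"""


def load_session_info(text):
    """Parse the session bootstrap information from INI-style text."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    section = parser["session"]
    return {
        "sdb_domain": section["sdb_domain"],
        "sqs_queues": [q.strip() for q in section["sqs_queues"].split(",")],
        "s3_bucket": section["s3_bucket"],
    }


if __name__ == "__main__":
    print(load_session_info(EXAMPLE_CONFIG))
```

Every client that holds these few values can find the queues and the bookkeeping domain on its own, which is why any number of clients can join the same session.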

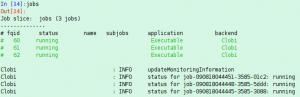

I will now submit the same job (the “full chain” thing) three times: the Nimbus VM has two virtual cores and its Job Agent will try to receive and run two jobs at the same time. The EC2 VM (m1.small) only has one virtual core. Hence, three jobs are needed to use the VMs to full capacity ;). This is a screenshot from the Ganga terminal session where I submitted the jobs:

The Clobi backend successfully did its job: it created three Clobi job IDs, submitted three SQS messages and uploaded three input sandbox archives.

5 Session monitoring (number of jobs)

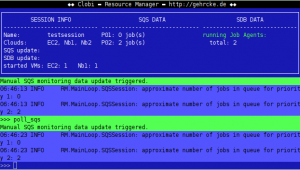

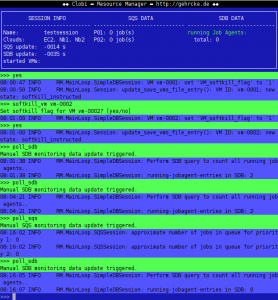

The Resource Manager is able to observe the queues and to determine the number of jobs submitted to them. It recognizes two jobs in the queue for priority 2:

Only two? Maybe one Job Agent polled a job right after submission, or maybe the SQS measurement was not exact (this is possible, too). Anyway, a short time later there is only one job left in the queues, and then they are empty. This means that the Job Agent on the Nimbus VM successfully grabbed two jobs and the EC2 VM grabbed one:

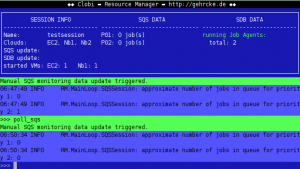

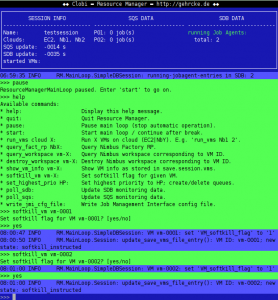

While Ganga and I are waiting for the jobs to finish (this takes some time, and Ganga periodically polls the state of the jobs via the Clobi backend / Clobi Job Management Interface), I use the time to point out an important fact: the objective is to automate the observe-queues-and-start/kill-VMs-as-required process in the future. The current Resource Manager is well prepared for this. Let me show you the monitoring loop:

It periodically observes the number of jobs in the queues and the number of running Job Agents. Based on this information, the Resource Manager could easily start / kill virtual machines (I’ve already demonstrated how easy starting is; killing is described later). I have not implemented this algorithm yet, because a) I had no time and b) I could have done it quick and dirty, but I really did not need this feature to develop and test the rest of the system. But this feature will come, because if it’s implemented properly with intelligent policies, it’s just great.
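Since this automation does not exist yet, the following is purely a sketch of what a very simple policy could look like, not a description of Clobi. All names, parameters and the policy itself are assumptions: start enough VMs to serve the jobs that cannot be picked up by currently free slots, capped by a maximum pool size, and only shrink the pool when nothing is waiting.

```python
import math


def vms_to_start(queued_jobs, free_slots, slots_per_vm, running_vms, max_vms):
    """How many additional VMs a naive policy would start."""
    # Jobs that no currently running agent could pick up.
    unserved = max(0, queued_jobs - free_slots)
    wanted = math.ceil(unserved / slots_per_vm)
    # Never exceed the configured pool size.
    return max(0, min(wanted, max_vms - running_vms))


def vms_to_stop(queued_jobs, idle_vms):
    """How many idle VMs a naive policy would shut down."""
    # Only shrink the pool when no job is waiting.
    return idle_vms if queued_jobs == 0 else 0
```

With three queued jobs, no free slots and two slots per VM, `vms_to_start(3, 0, 2, 0, 10)` asks for two VMs. A more intelligent policy would also consider startup time and cost per cloud.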

6 Job monitoring

As I’ve already mentioned, Ganga periodically checks the jobs’ states. For this purpose, the Ganga Clobi backend provides a special method that Ganga calls from time to time from one of its monitoring threads. Normally this happens quietly, but I’ve put some debug output into this method. Let’s check it out:

7 Job completion, output receipt

After some more time, Ganga discovered that one of the three jobs finished successfully. This means that the Job Agent detected a return code of 0 from the job shell script and could successfully store the output sandbox archive to S3. At this point, the Clobi backend triggers the download and extraction of the output sandbox archive. This looks like:

Clobi : INFO status for job-090818044445-3585-3088: completed_success

Ganga.GPIDev.Lib.Job : INFO job 60 status changed to "completed"

Clobi : INFO download atlassessions/0907210728-testsess-0c7e/jobs/out_sndbx_job-090818044445-3585-3088.tar.bz2 from S3

Clobi : INFO store key 0907210728-testsess-0c7e/jobs/out_sndbx_job-090818044445-3585-3088.tar.bz2 as file /home/gurke/gangadir/workspace/gurke/LocalAMGA/60/output/out_sndbx_job-090818044445-3585-3088.tar.bz2 from bucket atlassessions

Clobi : INFO Download of output sandbox archive successfull.
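The rule "success means return code 0 and the output was stored" can be sketched like this. The state name `completed_success` appears in the log above; `completed_error`, the function name and the `store_output` callback (a stand-in for the upload of the output sandbox) are assumptions for illustration.

```python
import subprocess
import sys


def run_job(command, store_output):
    """Run a job command and derive its final state.

    The job counts as successful only if it exits with return code 0
    and its output could be stored via the store_output callback.
    """
    result = subprocess.run(
        command, stdout=subprocess.PIPE, stderr=subprocess.STDOUT
    )
    if result.returncode != 0:
        return "completed_error"  # hypothetical state name
    try:
        store_output(result.stdout)
    except Exception:
        # Broad on purpose for this sketch: any storage failure
        # means the job's output is not available to the user.
        return "completed_error"
    return "completed_success"


if __name__ == "__main__":
    stored = []
    print(run_job([sys.executable, "-c", "print('job output')"], stored.append))
```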

Did you doubt that this is my first serious test, given that everything has worked so far? Some parts of the system have already been tested thoroughly, of course. But the Clobi backend for Ganga made the transition from vitally-important-features-missing to just-scratch-along-usability only a few hours ago. I’m really very happy that everything has worked so far, but the output sandbox archive extraction could be improved:

Clobi : CRITICAL Error while extracting output sandbox

Clobi : CRITICAL Traceback:

Traceback (most recent call last):

File "/mnt/hgfs/E/gsoc_code_repo/ganga_clobi_backend/Clobi/Clobi.py", line 243, in clobi_dl_extrct_outsandbox_arc

sp = subprocess.Popen(

NameError: global name 'subprocess' is not defined

Yoooah, I (want to) use Python’s subprocess module to extract the tar.bz2 archive with the system’s tar, but I forgot to import subprocess :-(. This is forgotten and fixed easily :-). Btw: of course I got three downloaded output archives and three extraction errors ;).
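Besides adding the missing `import subprocess`, the extraction could also be done without an external `tar` at all. The sketch below swaps in Python's standard `tarfile` module, which is a different technique from the subprocess call in the traceback; the function name and the path check are assumptions added for illustration.

```python
import os
import tarfile


def extract_output_sandbox(archive_path, target_dir):
    """Extract a .tar.bz2 archive into target_dir.

    Returns the names of the archive members.
    """
    root = os.path.realpath(target_dir)
    with tarfile.open(archive_path, "r:bz2") as archive:
        names = archive.getnames()
        # Refuse members that would end up outside of target_dir.
        for name in names:
            dest = os.path.realpath(os.path.join(target_dir, name))
            if dest != root and not dest.startswith(root + os.sep):
                raise ValueError("unsafe path in archive: %r" % name)
        archive.extractall(target_dir)
    return names
```

This keeps the extraction portable to machines without a `tar` binary, and a corrupt archive surfaces as a Python exception instead of a non-zero exit code.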

8 Examine output

The output sandbox archive files were stored on my local machine by Ganga’s Clobi backend. I take a look into one of them by extracting it manually:

$ ls

out_sndbx_job-090818044451-3585-01c2.tar.bz2

$ tar xjf out_sndbx_job-090818044451-3585-01c2.tar.bz2

$ ls

AOD_007410_00001.pool.root evgen.log joblog_job-090818044451-3585-01c2 recoAOD.log EVGEN_007410_00001.pool.root jobagent_log out_sndbx_job-090818044451-3585-01c2.tar.bz2

Great! The AOD file is there. This means that 1) Clobi did perfect work to control and manage the job and 2) the ATLAS Computing part (“The Full Chain”) worked perfectly:

- the interaction between a certain particle (which was defined within the input sandbox) and the ATLAS detector was successfully simulated.

- the ATLAS detector output (basically times and voltages) was calculated successfully.

- particle tracks and energy deposits were successfully reconstructed from times and voltages.

- an event summary was successfully built from tracks and energy deposits.

“The summary” is saved within the AOD file, which successfully returned to my local machine. Cool. Every single part of the system worked as it should (psss, don’t think of the extraction…).

9 VM shutdown

You have perhaps asked yourself how to dynamically kill VMs. Besides the “hard kill” (invoking Nimbus/EC2 API calls to shut down a VM), I’ve implemented a mechanism that I call “soft kill”: the Resource Manager sets the “soft kill flag” for a specific VM (in SimpleDB) and the corresponding Job Agent checks it from time to time. When the flag is set, the Job Agent waits until all currently running jobs are done and then shuts down the VM. Let’s watch it in action (I had to look up the command of my own application, too little sleep recently!):

After waiting some time we see the number of running Job Agents decrease to zero..
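The soft kill logic boils down to a flag check inside the agent's periodic loop. Here is a minimal sketch of that idea, with a plain dict standing in for the SimpleDB bookkeeping. The class, the attribute names and the `soft_kill` key are invented, and the detail that the agent stops accepting new jobs once the flag is set is an assumption.

```python
class SoftKillAgent:
    """Toy model of an agent that honors a soft kill flag."""

    def __init__(self, vm_id, bookkeeping):
        self.vm_id = vm_id
        self.bookkeeping = bookkeeping  # dict stand-in for a SimpleDB domain
        self.running_jobs = set()
        self.accepting_jobs = True
        self.shut_down = False

    def check(self):
        """One periodic check; returns True once the VM shut down."""
        flagged = self.bookkeeping.get(self.vm_id, {}).get("soft_kill")
        if flagged:
            # Assumption: no new jobs are taken once the flag is set.
            self.accepting_jobs = False
            if not self.running_jobs:
                self.shut_down = True  # a real agent would halt the VM here
        return self.shut_down
```

Compared with a hard kill, no running job is lost: the VM only goes away after its last job has finished.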

10 Appendix

If you are very interested, you can find some additional material:

- My earlier work on this topic (from last year), “Amazon Web Services for ATLAS Computing” (AWSAC) can be found here: http://gehrcke.de/awsac.

- The original GSoC project description can be found here.

- I’ve already written some blog posts about this project during Google Summer of Code.

A detailed, up-to-date and exact description of the system (“Clobi”) is planned for the future.

I will need the last days of GSoC to implement some missing and important features, to search for and fix bugs and to clean everything up to make it presentable. I will definitely work on this project after GSoC (as time allows, of course). Currently I’m thinking about pushing the project to either Bitbucket or Google Code. Both support Mercurial repositories, and this is what I have used for my code until now (locally).

If you like this, spread it! Every question and/or comment is much appreciated!

Thanks for listening :-)
